UC Berkeley shows off accelerated learning that puts robots on their feet in minutes – TechCrunch

Robots relying on AI to learn a new task generally require a laborious and repetitious training process. University of California, Berkeley researchers are attempting to simplify and shorten that with an innovative learning technique that has the robot filling in the gaps rather than starting from scratch.

The team shared several lines of work with TechCrunch to show at TC Sessions: Robotics today. In the video below you can hear about them, starting with UC Berkeley researcher Stephen James.

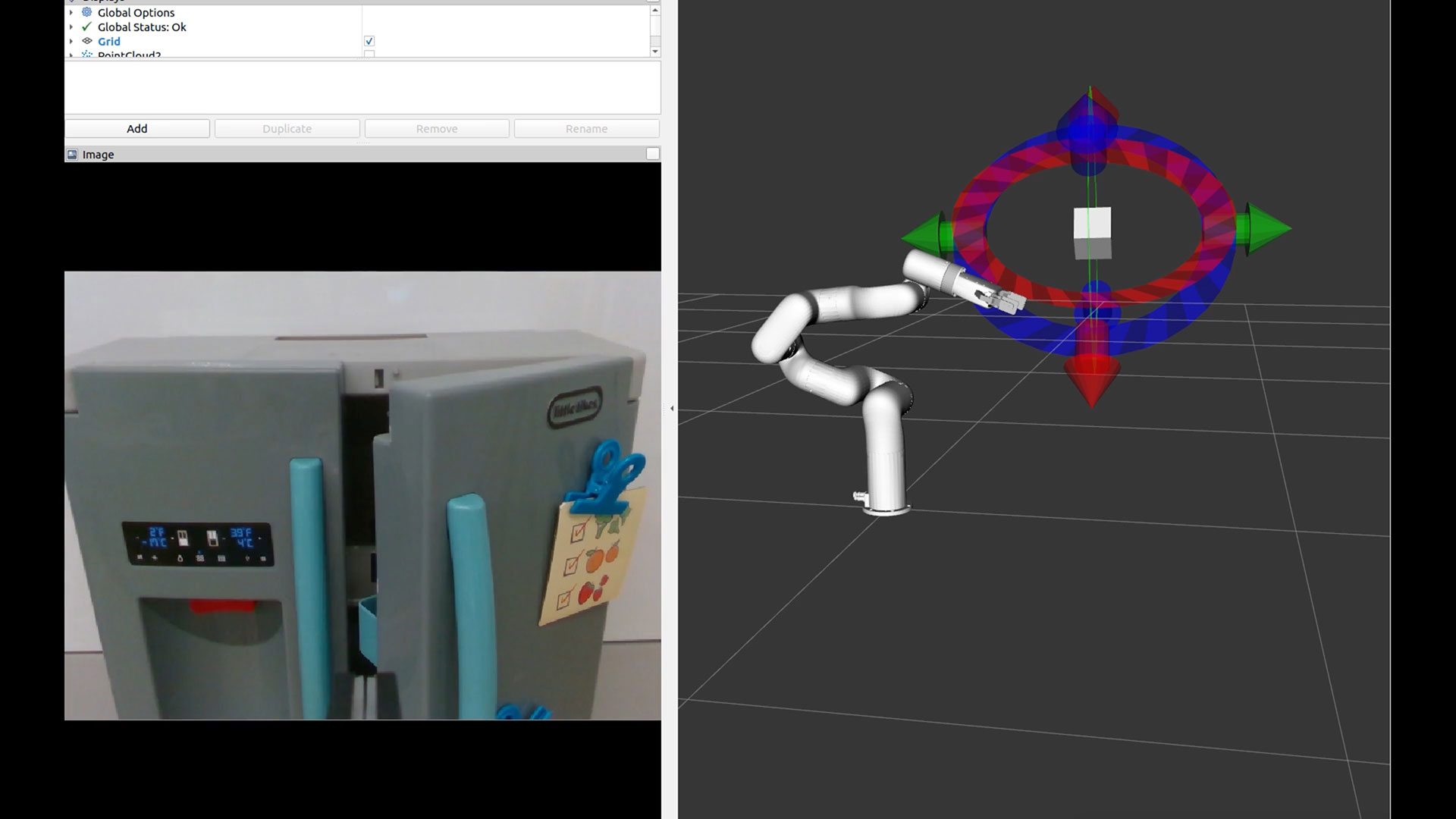

“The technique we’re employing is a kind of contrastive learning setup, where it takes in the YouTube video and it kind of patches out a bunch of areas, and the idea is that the robot is then trying to reconstruct that image,” James explained. “It has to understand what could be in those patches in order to then generate the idea of what could be behind there; it has to get a really good understanding of what’s going on in the world.”
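To make the "patch out and reconstruct" idea concrete, here is a minimal sketch in plain Python. It is not the Berkeley team's code: the function names `mask_patches` and `reconstruction_error`, the 2×2 patch size and the 75% masking ratio are all assumptions chosen for illustration, and a real system would use a neural network to fill in the hidden regions. The sketch only shows the two pieces James describes: hiding random patches of a frame, and scoring a reconstruction on the hidden pixels alone.

```python
import random


def mask_patches(image, patch, mask_ratio, seed=0):
    """Zero out a random subset of non-overlapping patch x patch tiles.

    `image` is a list of rows of numbers whose height and width are
    multiples of `patch`. Returns the masked copy plus the sorted list
    of hidden (tile_row, tile_col) positions. Illustrative only.
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be multiples of the patch size")
    tiles = [(r, c) for r in range(height // patch) for c in range(width // patch)]
    rng = random.Random(seed)
    hidden = sorted(rng.sample(tiles, round(mask_ratio * len(tiles))))
    masked = [row[:] for row in image]  # copy so the original frame is untouched
    for tile_row, tile_col in hidden:
        for y in range(tile_row * patch, (tile_row + 1) * patch):
            for x in range(tile_col * patch, (tile_col + 1) * patch):
                masked[y][x] = 0
    return masked, hidden


def reconstruction_error(original, predicted, hidden, patch):
    """Mean squared error measured only on the pixels that were hidden."""
    total, count = 0.0, 0
    for tile_row, tile_col in hidden:
        for y in range(tile_row * patch, (tile_row + 1) * patch):
            for x in range(tile_col * patch, (tile_col + 1) * patch):
                total += (original[y][x] - predicted[y][x]) ** 2
                count += 1
    return total / count if count else 0.0


# A toy 8x8 "frame" with nonzero pixel values (hypothetical data).
image = [[(y * 8 + x) % 7 + 1 for x in range(8)] for y in range(8)]
masked, hidden = mask_patches(image, patch=2, mask_ratio=0.75)

# A perfect reconstruction scores zero; leaving the holes blank does not.
perfect = reconstruction_error(image, image, hidden, patch=2)
blank = reconstruction_error(image, masked, hidden, patch=2)
```

Because the error is computed only where pixels were hidden, a model can lower it only by guessing what plausibly sits behind each patch, which is the pressure toward "understanding what's going on in the world" that James refers to.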

Of course it doesn’t learn just from watching YouTube, as common as that is in the human world. The operators have to move the robot itself, either physically or via a VR controller, to give it a general idea of what it’s trying to do. It combines this info with its wider understanding of the world gleaned from filling in the video images, and eventually may integrate many other sources as well.

The approach is already yielding results, James said: “Normally, it can sometimes take hundreds of demos to perform a new task, whereas now we can give a handful of demos, maybe 10, and it can perform the task.”

Image Credits: TechCrunch

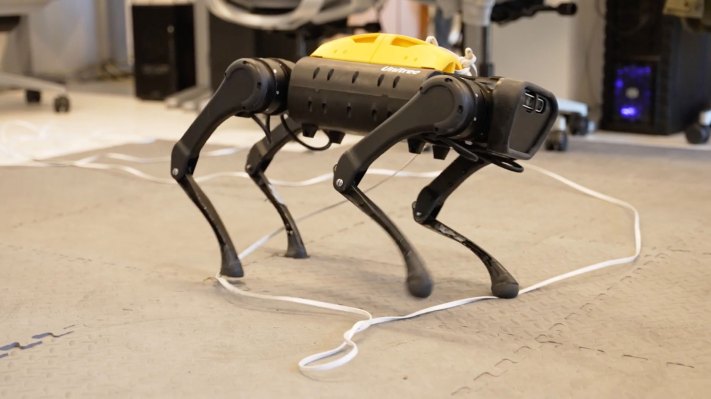

Alejandro Escontrela specializes in designing models that extract relevant data from YouTube videos, such as movements by animals, people or other robots. The robot uses these models to inform its own behavior, judging whether a given movement seems like something it should be trying out.

Ultimately it attempts to replicate movements from the videos such that another model watching them can’t tell whether it’s a robot or a real German shepherd chasing a ball.

Interestingly, many robots like this learn first in a simulation environment, testing out movements essentially in VR. But as Danijar Hafner explains, the processes have gotten efficient enough that they can skip that step, letting the robot romp in the real world and learn live from interactions like walking, tripping and of course being pushed. The advantage here is that it can learn while working rather than having to go back to the simulator to integrate new information, which further simplifies training.

“I think the holy grail of robot learning is to learn as much as you can in the real world, and as quickly as you can,” Hafner said. They certainly seem to be moving toward that goal. Check out the full video of the team’s work here.