Google’s Gradient backs Send AI to help enterprises extract data from complex documents | TechCrunch

A fledgling Dutch startup wants to help companies extract data from large volumes of complex documents where accuracy and security are paramount, and it has just secured the backing of Google’s Gradient Ventures to do so.

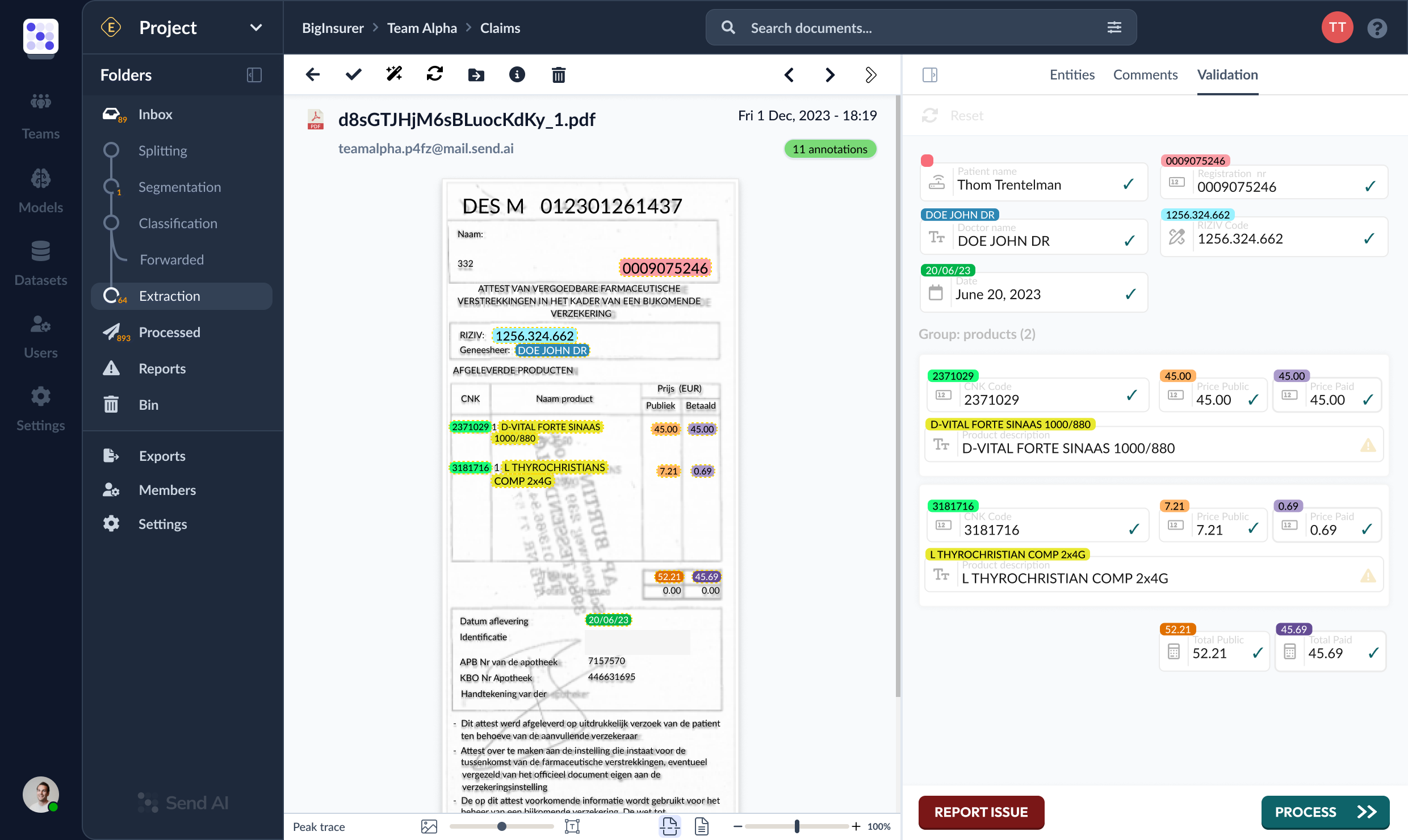

Send AI, as the startup is called, is taking on established incumbents in the document processing space such as UiPath, Abbyy, Rossum, and Kofax, with a customizable platform that allows companies to fine-tune AI models for their own individual data-extraction needs.

For instance, a company operating in a highly regulated industry such as insurance will likely have to process myriad formats, from PDFs and paper files to smartphone photos snapped with all manner of orientations and background “noise.” Such non-standard “unstructured” data types can be tricky enough for humans to parse, but an entirely machine-led approach can lead to erroneous claim rejections or reimbursements and administrative headaches down the line.

Indeed, typical off-the-shelf document processing software is often designed for more common document types that intersect with multiple industries, making it unsuitable for certain use cases. With Send AI, on the other hand, companies can train a computer vision model to recognize specific documents, and a separate language model to extract and validate the relevant data. Humans are looped in whenever the system is in any doubt, and can control and review each step through a web interface.

“This validation can be as simple as checking whether an expected number is really a number, or a more sophisticated lookup of a registration number in a database to see whether there’s a match,” Send AI founder and CEO Thom Trentelman told TechCrunch. “Any insecurities will be reported for human review.”
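The two kinds of check Trentelman describes can be illustrated with a minimal Python sketch. This is not Send AI's code: the field names (`claim_amount`, `registration_number`), the in-memory `KNOWN_REGISTRATIONS` set standing in for a database, and the `validate` helper are all hypothetical, chosen only to show how a failed check might be flagged for human review.

```python
from dataclasses import dataclass, field

# Hypothetical stand-in for a customer's registration database.
KNOWN_REGISTRATIONS = {"NL-123456", "NL-654321"}


@dataclass
class ValidationResult:
    values: dict
    issues: list = field(default_factory=list)

    @property
    def needs_human_review(self) -> bool:
        # Any failed check routes the document to a person.
        return bool(self.issues)


def is_number(text: str) -> bool:
    """Simple check: does the extracted string parse as a number?"""
    try:
        float(text.replace(",", ""))
        return True
    except ValueError:
        return False


def validate(extracted: dict) -> ValidationResult:
    """Run both checks on a dict of extracted string fields (names are assumed)."""
    result = ValidationResult(values=extracted)

    amount = extracted.get("claim_amount", "")
    if not is_number(amount):
        result.issues.append(f"claim_amount is not a number: {amount!r}")

    # The more sophisticated check: look the value up in a reference set.
    registration = extracted.get("registration_number", "")
    if registration not in KNOWN_REGISTRATIONS:
        result.issues.append(f"registration_number not found: {registration!r}")

    return result
```

Calling `validate({"claim_amount": "12O.50", "registration_number": "NL-000000"})` would record two issues (the amount contains a letter O, and the registration number has no match), so `needs_human_review` would be true.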

Founded out of Amsterdam in 2021 initially as Autopilot, Send AI previously raised a small $100,000 investment from a university graduate alumni fund, but as it starts to ramp things up, it has now raised a further €2.2 million ($2.4 million) in a pre-seed round of funding co-led by Google’s Gradient Ventures and Keen Venture Partners, with participation from a number of angels stemming from companies such as DeepMind.

How it works

Companies can access Send AI’s cloud-based software via APIs that funnel data from documents sent over email. Upon receipt, Send AI visually enhances the documents before sending them to its language models for classification and extraction.
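The sequence described above (enhance, then classify, then extract) can be sketched as a simple chain of steps. Everything in this Python example is an assumption made for illustration: the `Document` class, the keyword rules, and the regular expression are trivial stand-ins for the image enhancement and trained models that the article describes, and none of it reflects Send AI's actual API.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Document:
    raw_text: str
    doc_type: str = "unknown"
    fields: dict = field(default_factory=dict)


def enhance(doc: Document) -> Document:
    # Stand-in for visual enhancement: here we only normalise whitespace.
    doc.raw_text = " ".join(doc.raw_text.split())
    return doc


def classify(doc: Document) -> Document:
    # Stand-in for a trained classifier: a naive keyword rule.
    text = doc.raw_text.lower()
    if "invoice" in text:
        doc.doc_type = "invoice"
    elif "claim" in text:
        doc.doc_type = "claim"
    return doc


def extract(doc: Document) -> Document:
    # Stand-in for a trained extraction model: a single regular expression.
    match = re.search(r"total[:\s]+([\d.,]+)", doc.raw_text, re.IGNORECASE)
    if match:
        doc.fields["total"] = match.group(1)
    return doc


def process(doc: Document) -> Document:
    """Run the three steps in the order the article describes."""
    for step in (enhance, classify, extract):
        doc = step(doc)
    return doc
```

For example, `process(Document("  INVOICE  no. 42\n Total:   199.95 "))` would be classified as an invoice with a `total` field of `"199.95"`. Keeping each step as a separate function mirrors the idea that a person can review the output of any individual step.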

In terms of target market, Trentelman says that the company is primarily targeting larger enterprises, as they “struggle with documents the most,” though in truth any business that processes large volumes of documents could find a use for the technology.

Send AI: Data extraction. Image Credits: Send AI

It perhaps goes without saying that, besides the slew of document-processing tools already on the market, Send AI is up against a new breed of startups selling services built on powerful new large language models (LLMs) such as OpenAI’s GPT-X (which powers ChatGPT). But while Trentelman concedes that such products work well in situations that call for a “subjectively good” score, such as summarization or answering questions, it’s a different story where a high degree of accuracy is needed across large document volumes.

“You will hit walls with these technologies sooner than later — big, generic LLMs are still unpredictable, slow, and expensive,” Trentelman said. “At Send AI, we let the customer build their own solution.”

Under the hood, Send AI is built on smaller, open source models, which the customer first trains by processing a small set of documents by hand. After that, it’s rinse-and-repeat on new documents, with humans on hand to provide corrections.

In terms of pricing, Send AI charges on a credit-based basis, whereby customers pay per processing step. “This way, we can differentiate between processing a 50-page PDF or just a single-text snippet,” Trentelman said. “Our models are cheap, fast, and reliable, so we can deploy them on a per-customer basis. This way, customers are in control of their data and performance, which is why we do well in regulated industries such as health insurance and government.”
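To make the per-step pricing idea concrete, here is a rough Python sketch of how a credit count might scale with document size. The credit values, the step names, and the assumption that each step is billed once per page are all invented for illustration; the article does not disclose Send AI's actual rates or billing units.

```python
# Hypothetical credit cost per processing step (not Send AI's real prices).
CREDITS_PER_STEP = {"enhance": 1, "classify": 1, "extract": 2}


def credits_for(pages: int, steps=("enhance", "classify", "extract")) -> int:
    """Credits for a document, assuming each step is charged once per page."""
    if pages < 1:
        raise ValueError("a document needs at least one page")
    return pages * sum(CREDITS_PER_STEP[step] for step in steps)
```

Under these made-up numbers, a 50-page PDF run through all three steps would cost `credits_for(50)`, or 200 credits, while a single text snippet that only needs extraction would cost `credits_for(1, steps=("extract",))`, or 2 credits.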

Control

Send AI claims that its technology will appeal to highly regulated industries due to the control it gives customers over their data, which might seem counterintuitive given that it’s all cloud-based. However, Trentelman points to how a typical LLM from the likes of OpenAI works, specifically the way it might blend training data from multiple different customers into a single model, which raises the risk of sensitive data leakage. This is precisely why we’ve seen a slew of startups emerge with the promise of protecting private data within LLM-powered software.

Send AI attempts to address such concerns by deploying small, isolated open source transformer models for each customer.

“We use a variety of them to get the job done — out of the box they don’t impress much, but once trained on high quality data, they become powerful and precise,” Trentelman said.

So while the models and associated training data do still live on Send AI’s cloud, using isolated models means that it can pinpoint exactly where the data lives and thus delete it on request. This, according to Trentelman, is enough to make it a “preferred candidate” over other providers, and it goes some way toward convincing data privacy-focused companies that on-premise deployments aren’t their only option.

“Nowadays, more regulated companies allow suppliers to use public cloud, as long as they comply with an extensive list of regulations,” Trentelman said. “Upfront we have always gotten the question whether we could deploy on-premise, but eventually all but one company went with our public cloud offering.”

For now, Send AI is operating in private beta mode, though it already claims some impressive customers including insurance giant Axa. With a team of seven today, the company plans to use its fresh cash injection to double its headcount throughout the year ahead of a full commercial launch.